Google has been very aggressive about sending out warnings in Google Search Console saying that your site is blocking its CSS and JavaScript files. Today I received one of those warnings for my own site. When I first read the message, I didn’t think I was blocking any .css or .js files on my site. Then I looked a little further.

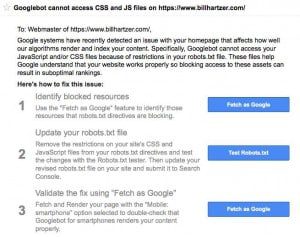

Here’s the message I received from Google on July 28, 2015. Apparently it was important enough that Google sent it not only through Search Console (formerly Google Webmaster Tools) but also by email:

Googlebot cannot access CSS and JS files on https://www.billhartzer.com/

July 28, 2015

To: Webmaster of https://www.billhartzer.com/,

Google systems have recently detected an issue with your homepage that affects how well our algorithms render and index your content. Specifically, Googlebot cannot access your JavaScript and/or CSS files because of restrictions in your robots.txt file. These files help Google understand that your website works properly so blocking access to these assets can result in suboptimal rankings.

Here’s how to fix this issue:

Identify blocked resources

Use the “Fetch as Google” feature to identify those resources that robots.txt directives are blocking.


Update your robots.txt file

Remove the restrictions on your site’s CSS and JavaScript files from your robots.txt directives and test the changes with the Robots.txt tester. Then update your revised robots.txt file on your site and submit it to Search Console.


Validate the fix using “Fetch as Google”

Fetch and Render your page with the “Mobile: smartphone” option selected to double-check that Googlebot for smartphones renders your content properly.

As an SEO, I’m well aware that you need to make sure you’re not blocking CSS or JavaScript files. That is typically controlled in your robots.txt file; you can find mine here: https://www.billhartzer.com/robots.txt. The robots.txt file has to live at the root of your domain, so that’s where you should find yours. If it’s not there, you should put one there.
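Because crawlers only look for robots.txt at the root of a host, the file that governs any page can be worked out from the page’s scheme and host alone. Here’s a minimal Python sketch using only the standard library; the helper name `robots_url` is my own, not part of any tool.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url: str) -> str:
    """Return the robots.txt URL that governs page_url.

    robots.txt always sits at the root of the scheme + host (+ port),
    no matter how deep the page itself is.
    """
    parts = urlsplit(page_url)
    # Keep scheme and netloc (host and optional port), replace the path,
    # and drop any query string or fragment.
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


if __name__ == "__main__":
    print(robots_url("https://www.billhartzer.com/some/deep/post/?ref=feed"))
    # → https://www.billhartzer.com/robots.txt
```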

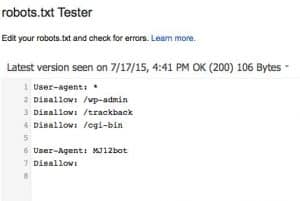

And here’s my robots.txt file, where I don’t appear to be blocking any .CSS or .JS (JavaScript) files:

As you can see above, there are no warnings or errors, so it appears that Google sees that I am NOT blocking any .css or .js files.

So if I’m not blocking (and haven’t been blocking) CSS or JavaScript files, why is Google warning me that my site IS blocking them? I see only two possibilities:

1. Google has sent a false message, and they’re wrong. That’s possible; they’ve been wrong before.

2. I am, in fact, blocking a .CSS or .JS file on my site.

Looking into this further, I realized that I am blocking access to my site’s wp-admin folder for security purposes, and there happens to be a common.min.js file in that folder. That is most likely the culprit.
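To confirm that a folder-level rule also catches the files inside that folder, you can feed robots.txt rules to Python’s standard `urllib.robotparser` module and ask whether Googlebot may fetch a given URL. This is only a sketch: the rules are an assumed minimal example (my full robots.txt isn’t reproduced here), and the exact path of common.min.js under wp-admin, like the theme stylesheet path, is a hypothetical placeholder. Also note that `urllib.robotparser` does simple prefix matching and is not Google’s own parser.

```python
from urllib.robotparser import RobotFileParser

# Assumed minimal robots.txt that blocks the WordPress admin folder.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Hypothetical asset URLs used for illustration only.
js_in_admin = "https://www.billhartzer.com/wp-admin/js/common.min.js"
theme_css = "https://www.billhartzer.com/wp-content/themes/example/style.css"

print(parser.can_fetch("Googlebot", js_in_admin))  # False: matched by Disallow: /wp-admin/
print(parser.can_fetch("Googlebot", theme_css))    # True: no rule matches this path
```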

How did I find this?

I searched Google for site:billhartzer.com/wp-admin/, which is the folder I’m blocking. It turns out there IS a .js file there, so I am, in fact, blocking one JavaScript file. It’s related to a theme I have installed, so I’ll need to update my robots.txt file so that it no longer blocks the wp-admin folder. Oh well. So much for security, huh?
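Instead of relying on a site: search, you could also check a page’s own CSS and JavaScript references against your robots.txt rules. Below is a rough Python sketch using only the standard library; the sample HTML, the rules, and the names `AssetCollector` and `blocked_assets` are hypothetical. It only approximates Google’s behavior, since `urllib.robotparser` has no wildcard support and applies the first matching rule.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlsplit
from urllib.robotparser import RobotFileParser


class AssetCollector(HTMLParser):
    """Collect the src/href of external scripts and stylesheets in an HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.assets: list[str] = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "script" and attrs.get("src"):
            self.assets.append(attrs["src"])
        elif tag == "link" and "stylesheet" in rel and attrs.get("href"):
            self.assets.append(attrs["href"])


def blocked_assets(page_url: str, html: str, robots_lines: list[str],
                   agent: str = "Googlebot") -> list[str]:
    """Return same-host CSS/JS URLs on the page that the robots.txt rules disallow."""
    collector = AssetCollector()
    collector.feed(html)
    collector.close()

    parser = RobotFileParser()
    parser.parse(robots_lines)

    host = urlsplit(page_url).netloc
    blocked = []
    for ref in collector.assets:
        url = urljoin(page_url, ref)  # resolve relative paths against the page
        # robots.txt is per host: assets on other hosts (e.g. a CDN) follow
        # that host's own robots.txt, so they are skipped here.
        if urlsplit(url).netloc != host:
            continue
        if not parser.can_fetch(agent, url):
            blocked.append(url)
    return blocked


if __name__ == "__main__":
    page = "https://www.billhartzer.com/"
    html = """
    <link rel="stylesheet" href="/wp-content/themes/example/style.css">
    <script src="/wp-admin/js/common.min.js"></script>
    <script src="https://cdn.example.com/lib.js"></script>
    """
    rules = ["User-agent: *", "Disallow: /wp-admin/"]
    print(blocked_assets(page, html, rules))
    # → ['https://www.billhartzer.com/wp-admin/js/common.min.js']
```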

Update

So I went ahead and updated my robots.txt file to allow crawling of the wp-admin folder, where the rogue .js file is located. I could have allowed just that one file by adding an “Allow” directive instead. For now, though, I want to see what effect allowing the whole wp-admin folder has. I’m pretty confident in the security of my site, so I’m not too worried about allowing that directory to be crawled.
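For reference, the narrower alternative (keeping wp-admin blocked but allowing the one script) could look like the rules in this Python sketch, checked with `urllib.robotparser`. The script path is again a hypothetical placeholder. Google applies the most specific (longest) matching rule, whereas `urllib.robotparser` applies the first matching rule in file order, so the Allow line is placed before the Disallow line to make both interpretations agree.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep wp-admin blocked, but allow one script inside it.
# Allow comes first because urllib.robotparser stops at the first matching rule;
# Google instead picks the longest matching path, which gives the same result here.
ROBOTS_TXT = """\
User-agent: *
Allow: /wp-admin/js/common.min.js
Disallow: /wp-admin/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

base = "https://www.billhartzer.com"
for path in ("/wp-admin/js/common.min.js", "/wp-admin/options.php"):
    print(path, parser.can_fetch("Googlebot", base + path))
# /wp-admin/js/common.min.js True
# /wp-admin/options.php False
```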

I also added the locations of my sitemap files to the robots.txt file, so if you look at my site’s robots.txt file, you’ll see those there too.
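Sitemap locations are declared with Sitemap: lines, which take absolute URLs and apply to the whole file rather than to a particular User-agent group. In Python 3.8 and later, `RobotFileParser.site_maps()` returns them, which makes for a quick sanity check. The robots.txt below is a hypothetical sketch of an updated file, and the sitemap filenames are placeholders, not my actual ones.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt after the update: wp-admin no longer disallowed,
# plus Sitemap lines (absolute URLs; filenames are placeholders).
ROBOTS_TXT = """\
User-agent: *
Disallow:

Sitemap: https://www.billhartzer.com/sitemap-posts.xml
Sitemap: https://www.billhartzer.com/sitemap-pages.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

print(parser.site_maps())
# → ['https://www.billhartzer.com/sitemap-posts.xml', 'https://www.billhartzer.com/sitemap-pages.xml']
print(parser.can_fetch("Googlebot", "https://www.billhartzer.com/wp-admin/js/common.min.js"))
# → True (an empty Disallow blocks nothing)
```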