I am currently working on a unique project with domain names that requires me to crawl hundreds of thousands of URLs. Essentially, in this particular project, I have a list of domain names. Let’s say, around 100,000 domain names. What I want to know, for this project, is which domain names are actually being used for live websites, which domain names are being redirected, and which domain names are registered only (they don’t have hosting set up).

I set off to find a tool that will do this quickly and efficiently for me. It turns out that that wasn’t an easy task. But, in the long run, the SEO community stepped up to the plate. I finally got the task done (sort of), but not completely to my satisfaction. Here’s what I found, and here’s what I learned in the process.

Like I mentioned, I really need to do only a few things:

- Take a list of URLs in a text file.

- Give that list of URLs to the tool (either copy/paste or upload the text file).

- Let the tool crawl the URLs and get the response code (file size would be good, too).

- Tell me, in a report (or exportable spreadsheet, .csv, etc.) the status of each URL.
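For what it’s worth, the four steps above can be sketched in a few dozen lines of Python using only the standard library. This is a rough sketch under my own assumptions, not how any of the tools mentioned here work: the function names (`fetch_status`, `crawl`, `crawl_file`), the CSV column names, the use of HEAD requests, and the 20-thread pool are all illustrative choices. It does not follow redirects, so a 301 is reported as a 301.

```python
import csv
import io
import urllib.error
import urllib.request
from concurrent.futures import ThreadPoolExecutor


class _NoRedirect(urllib.request.HTTPRedirectHandler):
    """Stop urllib from following redirects so 3xx codes are reported as-is."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        return None


_opener = urllib.request.build_opener(_NoRedirect)


def fetch_status(url, timeout=10):
    """Return (status, size); status is an HTTP code or an error label."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with _opener.open(request, timeout=timeout) as response:
            return response.status, response.headers.get("Content-Length", "")
    except urllib.error.HTTPError as err:
        # Unfollowed 3xx, plus 4xx and 5xx, arrive here and still carry a code.
        return err.code, err.headers.get("Content-Length", "")
    except Exception as err:
        # DNS failure, timeout, refused connection, TLS error, bad URL, etc.
        return type(err).__name__, ""


def crawl(urls, out, fetch=fetch_status, workers=20):
    """Fetch every URL and write one CSV row per URL to the open file `out`."""
    urls = list(urls)
    writer = csv.writer(out)
    writer.writerow(["url", "status", "content_length"])
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as the input URLs.
        for url, (status, size) in zip(urls, pool.map(fetch, urls)):
            writer.writerow([url, status, size])


def crawl_file(in_path, out_path):
    """Read one URL per line from in_path and write the report to out_path."""
    with open(in_path, encoding="utf-8") as source:
        urls = [line.strip() for line in source if line.strip()]
    with open(out_path, "w", newline="", encoding="utf-8") as out:
        crawl(urls, out)
```

Calling `crawl_file("urls.txt", "status.csv")` (both file names are placeholders) would produce the spreadsheet. Some servers answer HEAD requests differently than GET requests, and many omit the size on a HEAD response, so treat the output as a first pass.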

That really seems like a pretty easy task. But most of the tools out there, including the ones in my current toolset, don’t seem to be up to this particular task. In fact, many of the tools will do this but gather too much information. I don’t need the title tag of the page, I don’t need the meta description tag of the page, and so forth. I just need to know what the response code of each site is.

So, I started with my initial list of domain names (this happens to be the zone file for one particular TLD, or top-level domain). I won’t go into details of how/why/what I’m doing with this data because that’s irrelevant right now. But it is for a project I’m working on that will be public once I’m done.

Once I had the list of domain names in the text file, I actually had to make some changes and additions to the text file. I realized that website owners being the way they are, I can’t rely on just crawling a list of URLs like this:

http://billhartzer.com

In fact, sometimes (oftentimes?) when you go to a domain name starting with just http:// it won’t resolve. Some sites aren’t set up properly with a redirect from the http:// version to the http://www version of the site. So, as a result, if I just crawled http://domain.com sites, I might get false information: I might think that a domain isn’t live when in fact it has a site on it. So, I had to take my list of 100,000 domain names and then expand it to crawl each of these versions of the site:

- http://

- https://

- http://www

- https://www

This list of about 100,000 domain names quickly grew to 400,000 URLs that I needed to crawl and get the response codes for.
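Here’s a small Python sketch of that expansion step. The function names are my own, and the cleanup rules (lowercasing, dropping a trailing dot as zone files often have, and stripping an existing `www.`) are assumptions about what the input might look like rather than anything a particular tool requires.

```python
def expand_domain(domain):
    """Return the four URL variants to crawl for one bare domain name."""
    host = domain.strip().lower().rstrip(".")
    if host.startswith("www."):
        host = host[4:]
    return [
        f"{scheme}://{prefix}{host}"
        for prefix in ("", "www.")
        for scheme in ("http", "https")
    ]


def expand_all(domains):
    """Expand every non-blank domain in an iterable into its URL variants."""
    urls = []
    for domain in domains:
        if domain.strip():
            urls.extend(expand_domain(domain))
    return urls
```

For example, `expand_domain("billhartzer.com")` returns the http://, https://, http://www, and https://www versions in that order, so 100,000 domains become 400,000 URLs.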

My initial crawl started with Screaming Frog’s SEO Spider. I call myself an advanced user of Screaming Frog, and I’ve successfully used it to crawl a 10 million page site. So, that’s really no problem, I thought. It was able to crawl all of the URLs (after some tweaks to the settings and memory allocation), but I ran into problems when I went to export the data. The .CSV files were very large, and they had too much data. I only need response codes, and it seems as though there’s no way to turn off the gathering of title tags, meta description tags, and other data that is useless to me in this crawl. I can, in fact, export the response codes, and that’s helpful. I can, for example, export all of the URLs with “3xx response codes”. But I can’t stop it from gathering too much data. And dealing with large .CSV files proved difficult (even on an up-to-date MacBook Pro with 16GB of RAM).

Another curious issue that I ran up against using Screaming Frog for this particular crawl was the actual responses. It turns out that some of the domain names (when I spot checked several) gave Screaming Frog a DNS error, as if no DNS had been set up for that domain. However, one of them is a live site with over 100,000 backlinks and is quite popular. So, I’m not sure what was happening or why I got a DNS error for sites that are, in fact, live sites.
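One way to get a second opinion on a DNS error like this is to ask the operating system’s resolver directly. The sketch below is only that, a spot check: the `resolves` helper is a name I made up, it uses Python’s standard `socket.getaddrinfo`, and it says nothing about why a crawler saw an error (rate limits or timeouts on the DNS server during a large crawl are possibilities I can’t confirm).

```python
import socket


def resolves(host, resolver=socket.getaddrinfo):
    """Return the sorted IP addresses for host, or [] if the lookup fails."""
    try:
        infos = resolver(host, None)
    except socket.gaierror:
        return []
    # Each entry is (family, type, proto, canonname, sockaddr);
    # sockaddr[0] is the IP address for both IPv4 and IPv6.
    return sorted({info[4][0] for info in infos})
```

An empty list for a domain that a crawler flagged agrees with the crawler. A list of addresses suggests the DNS error was temporary or specific to that crawl.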

Please note that I still use Screaming Frog’s SEO Spider just about every day for other crawling tasks, just not this one in particular, which is unique. I really like the tool, and can’t do my job without it. I do recommend that they allow you to stop certain data from being gathered when you crawl (like title tags, for example). That would be a great addition to the tool.

So, after getting frustrated with Screaming Frog, I turned to the SEO community for recommendations. I actually got several, and these were all worth looking at, not necessarily in this order:

- Headmaster SEO (https://headmasterseo.com/)

- Outwit Hub (https://www.outwit.com/products/hub/)

- Scrapebox (http://www.scrapebox.com/)

- OnCrawl (http://www.oncrawl.com/)

- Deep Crawl (https://www.deepcrawl.com/)

- URL Profiler (http://urlprofiler.com/)

- lwp-request (http://search.cpan.org/dist/libwww-perl/bin/lwp-request)

- check-urls.sh (https://gist.github.com/davidalger/7441cc05378475d1f7795511d2d724ab)

What I found, though, using several different tools, is that when you crawl you won’t always get the same response code from websites. It turns out that while some crawlers show one response code, another tool might show a completely different response code. I am not sure which Googler said it, but I recall them saying “web crawling is difficult”, which I now completely understand.
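If two tools can each export a CSV of results, the disagreements are easy to list. This sketch assumes each export has `url` and `status` columns, which is my own assumption; real exports from these tools will likely use different column names and need adjusting.

```python
import csv
import io


def load_statuses(handle):
    """Map url -> status from an open CSV with 'url' and 'status' columns."""
    return {row["url"]: row["status"] for row in csv.DictReader(handle)}


def disagreements(first, second):
    """Return (url, status_in_first, status_in_second) where the two differ."""
    shared = first.keys() & second.keys()
    return sorted(
        (url, first[url], second[url])
        for url in shared
        if first[url] != second[url]
    )
```

URLs that appear in only one export are ignored here. The ones that come back are good candidates for a manual spot check in a browser.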

If there are some tools that I should take a look at, let me know. If you have any other suggestions or recommendations, let me know over on Twitter:

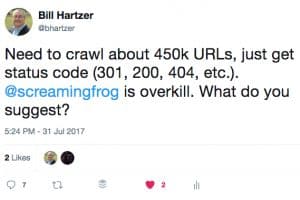

Need to crawl about 450k URLs, just get status code (301, 200, 404, etc.). @screamingfrog is overkill. What do you suggest?

— Bill Hartzer (@bhartzer) July 31, 2017