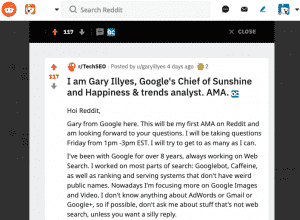

Gary Illyes, Google’s Chief of Sunshine and Happiness and Trends Analyst, recently did an AMA (Ask Me Anything) on Reddit. A lot of questions were asked, so I’ve pulled out what I consider the most important questions and answers. You can read the entire Reddit AMA with Gary here.

Hreflang Conflicting Statements

Hey Gary, you and /u/johnmu have conflicting statements on hreflang. John says they will not help with rankings and you have said they’re treated as a cluster and they should be able to use each others ranking signals. Can we get an official clarification on this?

Gary’s Response

This is an interesting question and I think the confusion is more about the internal vs external perception of what’s a “ranking benefit”. You will NOT receive a ranking benefit per se, at least not in the internal sense of the term. What you will receive is more targeted traffic. Let me give you an example:

Query: “AmPath” (so we don’t use a real company name ::eyeroll:: )

User country and location: es-ES

Your site has page A for that term in EN and page B in ES, with hreflang link between them.

In this case, at least when I (re)implemented hreflang, what would happen is that when we see the query, we’d retrieve A because, let’s say, it has stronger signals, but we see that it has a sibling page B in ES that would be better for that user, so we do a second pass retrieval and present the user page B instead of A, at the location (rank?) of A.
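
To make the "second pass" concrete, here is a minimal Python sketch of the behavior Gary describes. It is a toy model, not Google's implementation: the `Page` class, the `score` field (a stand-in for "stronger signals"), and the example URLs are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    url: str
    lang: str          # language of the page, e.g. "en" or "es"
    score: float       # hypothetical stand-in for "strength of signals"
    alternates: dict = field(default_factory=dict)  # hreflang map: lang -> url


def rank_with_hreflang(pages, user_lang):
    """Rank by score, then swap each result for its sibling in the user's language."""
    by_url = {p.url: p for p in pages}
    ranked = sorted(pages, key=lambda p: p.score, reverse=True)  # first pass
    results, seen = [], set()
    for page in ranked:
        sibling_url = page.alternates.get(user_lang)
        # Second pass: show the sibling instead, at the original page's position.
        chosen = by_url.get(sibling_url, page) if sibling_url else page
        if chosen.url not in seen:
            seen.add(chosen.url)
            results.append(chosen.url)
    return results


pages = [
    Page("https://example.com/en/ampath", "en", 0.9,
         {"es": "https://example.com/es/ampath"}),
    Page("https://example.com/es/ampath", "es", 0.4,
         {"en": "https://example.com/en/ampath"}),
    Page("https://other.example/ampath", "en", 0.7),
]
print(rank_with_hreflang(pages, "es"))
```

In this toy example the Spanish page takes the top slot for a Spanish user even though its own score is the lowest, which matches the "more targeted traffic, not a ranking benefit" framing.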

CTR and Adjusting Query, Repeat Visits

Does Google use the interactions of searchers to alter what position certain results may hold? Those actions could include, but aren’t limited to: CTR, returning and clicking another listing, adjusting the query, repeat searches, repeat visits, etc.

Gary’s Response

We primarily use these things in evaluations.

Mentions are Picked Up

You said that “mentions” are picked up, but not counted as links which makes sense. What is something these mentions might be used for?

Gary’s Response

Entity Determination.

Getting More than 1,000 Rows from Index Coverage Report in GSC

When will we be able to get more than 1k rows out of the Index Coverage report in Google Search Console? Will there be an API for this?

Gary’s Response

To the best of my knowledge that limit is a technical limitation…The whole point of moving to the new search console is to get away from a decade old implementation, and make the thing more accurate, faster, and generally more useful for all users. That will perhaps be reflected in the API, too.

Internal Linking Over Optimization Penalty

Is there an internal linking overoptimization penalty?

Gary’s Response

No, you can abuse your internal links as much as you want AFAIK.

Overlooked by SEOs

In your opinion, what type of questions SHOULD we be asking? Is there anything that most SEOs tend to overlook/not pay attention to?

Gary’s Response

Google Images and Video search is often overlooked, but they have massive potential.

How Does Google Measure Quality or Determine Relevance?

Is there anything you can share around how Google measures quality or determines relevance?

Gary’s Response

We have this massive doc that touches on those things:

https://static.googleusercontent.com/media/www.google.co.uk/en/uk/insidesearch/howsearchworks/assets/searchqualityevaluatorguidelines.pdf

Basically we have an idea about determining relevance, we ask raters to evaluate the idea, raters say it looks good, we deploy it. Relevance is a hairy topic though, we could chat about it for days and still not have a final answer. It’s a dynamic thing that’s evolving a lot.

Metrics Transferring E.A.T from One Site to Another

To what extent do metrics around E.A.T transfer, through the link graph, from one site to another?

Gary’s Response

I guess that’s a little oversimplified, but yeah.

Syndication and Canonical Tags

If I syndicate content and the other site has a canonical back or it gets treated as the same page as the original because it is duplicate, do the signals like internal/external links to the content on the other site count for me which seems to be how it would be handled or is this a special case Google looks for and says hey that’s kind of fishy and likely paid, so don’t count those?

Gary’s Response

The page in a dup cluster that shows up in the results will see more benefits.

Google’s NLP API to Write Better Content

What is your opinion of using Google’s NLP API to write better content?

Gary’s Response

If you can generate content that’s indistinguishable from that of a human’s, go for it.

Mapping Content to the Buyer’s Journey

If a site maps content to the buyer’s journey, with pages for early in the buying process, middle, end, checkout, is it reasonable to speculate that Google sees the site as more authoritative on the topic than someone who just has a really popular product page?

Gary’s Response

No.

Content Across Multiple Pages

If a site has content around a subject across many pages, but not all pages are popular or get more traffic, can they still send helpful signals that the site is trustworthy and authoritative on the subject?

Gary’s Response

Yes.

Web Accessibility a Direct Ranking Factor?

Will web accessibility be considered a direct ranking factor for images and video rankings?

Gary’s Response

Unfortunately, no.

CrUX Data Being Used to Assess Speed?

Is CrUX data being used to assess page speed as a ranking factor?

Gary’s Response

I don’t remember where the data is coming from, but not our publicly available tools, of which we have many for reasons that are beyond me.

Variables that Impact How Many Bytes of Data Google Bot will Crawl and Index

What are the variables that impact how many bytes of data gbot is willing to crawl + index in search?

Gary’s Response

We have a max size limit in Googlebot, it’s relatively large, but I don’t remember the exact number. Other than that, we don’t have anything else that I know of. Googlebot doesn’t randomly abandon a connection if it already started receiving bytes, though, unless the connection is reset or something. And we definitely index whatever we got from Googlebot.

Does RankBrain Use UX Signals?

Can you please confirm/deny whether RB uses UX signals of any kind?

Gary’s Response

RankBrain is a PR-sexy machine learning ranking component that uses historical search data to predict what would a user most likely click on for a previously unseen query. It is a really cool piece of engineering that saved our butts countless times whenever traditional algos were like, e.g. “oh look a “not” in the query string! let’s ignore the hell out of it!”, but it’s generally just relying on (sometimes) months old data about what happened on the results page itself, not on the landing page. Dwell time, CTR, whatever Fishkin’s new theory is, those are generally made up crap. Search is much more simple than people think.

RankBrain & CTR, Dwell Time, Bounce Rate

If RankBrain is trained on what users clicked on in the past, then isn’t it using something akin to CTR? Dwell Time and Bounce Rate imply a post-SERP interaction, but CTR doesn’t.

Gary’s Response

Verification or didn’t happen!

Country Targeted Setting in Google Search Console

To what extent does the “country targeted” setting in Search Console for a non-English speaking nation negatively affect a domain hack’s presence to people of another language in general google.com search?

Can Google understand that ccTLD domain hacks that cannot be set to “generic” targeting are not necessarily meant for the people or language of the domain’s ccTLD?

Gary’s Response

The way it affects its ranks is indirect I think. You have lots of gTLDs that are targeted to US in the result set where your .lk domain tries to show up, those results are relevant and on top of that they get a slight boost for being “local” (i.e. targeted through search console). Because you can’t get that boost with your domain for anything other than Sri Lanka, you are starting from a “penalty position” (in the sense of sports).

Are Website Features and Functionality a Ranking Signal?

Does Google also look at things like website features or functionality as a ranking signal?

Gary’s Response

Google in general yes, of course. If you have opening hours on one of your pages, it may show up in… umm… maps listings? Whatever they’re called. Google WEB search doesn’t care about those things though.

CTR, Dwell Time

If the algorithm isn’t tracking CTR, Dwell time, etc. how does Google know if a piece of content is successful? Or is this determined by on-page elements that Google deems to be good?

Gary’s Response

PR answer: we use over 200 signals to rank pages. Gary answer: we use over 200 signals to rank pages, and some of those are even announced and you mentioned them.

Rel=Author

When you said “Google doesn’t need rel=author because it’s smarter than that,” what did you mean? What signals does Google currently look at to analyze author authority? What about for unknown authors?

Gary’s Response

I’m assuming we meant that we can see the bylines….I think that’s missing some context (which i don’t remember anymore…Unlikely I’ll remember the context, those AMA’s were super fast paced and support annoying, but i do think i meant bylines from pages.

Stopping Googlebot from Crawling

We have search/teaser URLs like this.

https://example.com/typeofproduct/selection/?productfind=bluewidget or https://example.com/loading/?&parameter1=X&AB_source=XYZ&addPixel=yes etc.

They are noindex. From server logs I can see Googlebot crawling all these pages like crazy, and since our site is fairly large I’d like our friend to focus on more important pages.

I tested rel=”canonical”, which can be only partially right in this case, but the result is the same. Crawling and crawling.

Is blocking via robots.txt the only option?

Gary’s Response

Robots.txt is respected for what it’s meant to do. Period. There’s no such thing as “sometimes can be ignored”….You can also use the GSC parameter thingie, but you can blow your leg off with that cannon.
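
As a rough illustration of the robots.txt route, the sketch below uses Python's standard `urllib.robotparser` to check which URLs a given rule set would block for Googlebot. The `Disallow` paths are assumptions derived from the example URLs in the question, and the `/products/` URL is hypothetical. Note that this standard-library parser does plain prefix matching, so the rules deliberately avoid wildcards.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules covering the two URL patterns from the question.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /typeofproduct/selection/
Disallow: /loading/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_crawlable(url, agent="Googlebot"):
    """Return True if the rules above allow `agent` to fetch `url`."""
    return parser.can_fetch(agent, url)


for url in [
    "https://example.com/typeofproduct/selection/?productfind=bluewidget",
    "https://example.com/loading/?parameter1=X&AB_source=XYZ",
    "https://example.com/products/bluewidget",
]:
    print(url, "->", "crawlable" if is_crawlable(url) else "blocked")
```

Testing rules this way before deploying them is a cheap safeguard against accidentally blocking the pages you do want crawled.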

The Future of AMP

What are your thoughts on the future of AMP and how it will evolve with Search?

Gary’s Response

Honestly, I don’t know. For users it’s excellent if the landing AMP is properly implemented and offers the same functionality as its parent, however that’s often not the case. For me as a user that’s frustrating, so I’d hope the search-amp team will focus more on ensuring that we only show AMPs in the results if they’re standalone or are on par with the parent.

Marketing & Business Jargon a Negative Factor?

Is marketing/business jargon a negative factor? If not, can you make it one?

Gary’s Response

No and No.

Noindex, Follow Pages and 404s

Can you clarify Google looking at a “noindex, follow” page as a 404 eventually?

Gary’s Response

If the page is noindex, it’s not “404 eventually”, it’s just not indexed. If you add “follow” directive to the page, Googlebot will discover and follow the links. That’s it, I think….Unless we stop visiting the page altogether, which if it’s linked well likely won’t happen (e.g. it’s scheduled because it’s linked), we will follow the links.
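
For readers who want to audit their own pages, here is a small Python sketch that interprets the `content` value of a robots meta tag. The function name `parse_robots_content` is made up for this example, and it only models the index/follow distinction discussed above, not every directive.

```python
def parse_robots_content(content):
    """Interpret a robots meta 'content' value such as 'noindex, follow'."""
    directives = {d.strip().lower() for d in content.split(",") if d.strip()}
    # "none" is shorthand for "noindex, nofollow".
    return {
        "index": "noindex" not in directives and "none" not in directives,
        "follow": "nofollow" not in directives and "none" not in directives,
    }


print(parse_robots_content("noindex, follow"))
# {'index': False, 'follow': True}
```

A page that returns `index: False, follow: True` is the case Gary describes: kept out of the index, with its links still eligible to be followed.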

Instant Indexing

Will the instant indexing available through Wix and apparently soon through yoast ever be available to everyone, or will it only ever be offered through certain CMS / Plugins / Services?

Also given that the former indexing tools, like fetch & submit in search console for example, had to be curtailed due to abuse / overuse, how will you handle the use of these instant indexing offerings?

Gary’s Response

Currently we’re testing our own limitations with the indexing api as well as the usefulness of pushed content vs pulled. We don’t really have anything to announce just yet… As for yoast, I don’t want to step on anyone’s toes (e.g. our Indexing API PM), but it may be that he over-announced some things.

Google PageSpeed Module

Is Google’s Pagespeed Module still relevant?

Gary’s Response

the server module? yes, that is always relevant imo

Removing Pages from Your Site

We are looking to remove the URLs in batches over a six month period…A small proportion of the URLs will be 301 redirected to relevant remaining pages but the majority will be orphaned from the IA and 404ed….So basically over a 6 month period we will be adding 30,000 404 errors to the site.

Do you foresee any potential issues with this approach?

Gary’s Response

Removing pages will have ranking drop effects in most of the cases. That’s because:

1 those pages might have gotten traffic that you’re gonna lose.

2 those pages might have linked to other pages, so losing them will orphan or remove some PR from those target pages.

Batching sounds like a good idea; you can do damage control much easier.
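
One way to plan such batches is sketched below in Python. It is only an illustration under stated assumptions: the function name, the round-robin split, and the example paths are all hypothetical, and a real plan would probably group URLs by section or traffic rather than by list position.

```python
def plan_removal_batches(urls, redirect_targets, batch_count=6):
    """Split URLs into batches; each entry is (url, status, target)."""
    batches = [[] for _ in range(batch_count)]
    for index, url in enumerate(urls):
        target = redirect_targets.get(url)
        status = 301 if target else 404  # redirect when a relevant page remains
        batches[index % batch_count].append((url, status, target))
    return batches


# Hypothetical data: seven old URLs, one of which has a relevant replacement.
urls = [f"/old/page-{n}" for n in range(1, 8)]
redirect_targets = {"/old/page-2": "/guides/widgets"}

for month, batch in enumerate(plan_removal_batches(urls, redirect_targets), start=1):
    print(f"month {month}: {batch}")
```

Keeping each batch small and recorded like this makes it easier to tie a traffic change back to the specific URLs removed that month.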

Delay in New Sites Ranking

Every time I’ve uploaded a new site to GSC it’s always taken about a week for google to start picking up the website and ranking it for keywords/brand name. By ranking I don’t mean it’s jumped straight to first but it’s hit the top 100 before eventually hitting the first page over the course of a month or more.

Gary’s Response

Yeah, there are anti-spam mechanisms that can do that to your site, but you really have to look spammy for them to trigger….Also, no, I’m not going to talk about them for obvious reasons.

Support for es-419 hreflang

Do you support “es-419” hreflang (to target LATAM)?

Gary’s Response

No.

Domains Being Damaged and Not Repairable

Can a domain be damaged in Google’s algorithm to the point that it’s not repairable? I don’t mean manual penalties, but for some reason the domain won’t be able to rank given its history of sketchy content / behavior?

Gary’s Response

No.

Is Google’s Image Recognition Technology a Relevancy Signal?

Is Google’s image recognition technology used as a relevancy signal within the document selection algorithm within the index?

Gary’s Response

Yea.

Secret SEO Recipe

Do you have a secret seo recipe that you would never share but sometimes daydream about how much $ the rankings could generate?

Gary’s Response

Yes.

Featured Snippets in More European Locales?

Do you see featured snippets rolling-out in more European locales in the next two years?

Gary’s Response

I would sure hope so, they can be great for both the site and the user, but unfortunately it’s not just technicality where we roll them out. I’ll let your imagination roll for what may prevent us from rolling them out globally.

301 and 302 Redirects

For redirects and value being passed with 302s, how does that work? I get that if it’s in place for a while then things will be consolidated, but let’s say I have an old page and a new page, a 302 is saying keep the old page indexed for now and the new page is also indexed, so it seems the value is split between the 2 and not consolidated in this case. Can you clarify how this works?

Gary’s Response

I thought we fixed that and now 301 and 302 are pretty much the same.

Folder Level Signals

Do you have any folder level signals around content, or are the folders used potentially more for crawling/patterns?

Gary’s Response

They’re more like crawling patterns in most cases, but they can become their own site “chunk”. Like, if you have a hosting platform that has url structures example.com/username/blog, then we’d eventually chunk this site into lots of mini sites that live under example.com.
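
As a loose illustration of what "chunking" by folder could look like, the Python sketch below groups URLs by host plus first path segment. This is purely an assumption about the general idea; the answer does not say how Google actually decides where a chunk starts, and the usernames are invented.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def chunk_by_first_folder(urls):
    """Group URLs into 'mini sites' keyed by host + first path segment."""
    chunks = defaultdict(list)
    for url in urls:
        parts = urlsplit(url)
        segments = [s for s in parts.path.split("/") if s]
        key = f"{parts.netloc}/{segments[0]}" if segments else parts.netloc
        chunks[key].append(url)
    return dict(chunks)


urls = [
    "https://example.com/alice/blog",
    "https://example.com/alice/about",
    "https://example.com/bob/blog",
]
for chunk, members in chunk_by_first_folder(urls).items():
    print(chunk, len(members))
```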

Redirects that Go Away

For redirects, what happens if you 301 redirect a page and say a year later or 5 years later that redirect is no longer in place but Google tries to crawl the old page, are the signals no longer consolidated?

Gary’s Response

By that time the target had enough signals and we stopped trying to push the signals since we’d already done it. At that point the source will start accumulating its own signals again.

Links from 2 Different Pages on Same Domain

Is it valuable to have 2 links from 2 different pages on the same domain? Would the value, if relevant, be the same as having 2 links from separate domains, or does the second link provide no extra value?

Would also be great to hear your thoughts on the future of SEO and whether you think links will continue to play any part in the future or if it will take the route to ranking websites by the number of user/audience approach with the relevancy of course.

Gary’s Response

1 yes, they’re both counted.

2 i really wish SEOs went back to the basics (i.e. MAKE THAT DAMN SITE CRAWLABLE) instead of focusing on silly updates and made up terms by the rank trackers, and that they talked more with the developers of the website once done with the first part of this sentence.

Ranking Signals – Any You Care To Mention?

The CEO of Google during his testifying to Congress about user data (how the search works) said that there were 200 ranking signal factors, but he only mentioned 3 of them: relevance, freshness, popularity. Would you like to mention several more ranking factors? <3 So I will be able to implement them on my website and get better rankings.

Gary’s Response

Country the site is local to, rankbrain, pagerank/links, language, pornyness, etc.

Updates to Requirements for QAPage

With the update to requirements for QAPage structured data for enhancements, when can we expect to see FAQ Page structured data built into actual enhancements? Are you handing out manual actions for websites that use QAPage structured data without allowing for users to submit answers?

Gary’s Response

I don’t know of plans that involve QAPage SD other than what’s already deployed. Also not aware of manual actions, but it’s probably something we should keep an eye on, so I’ll send this to the SD manual actions team.

Mobile First Algorithm and Poor Page Speeds

If a site has not been notified that they are on the mobile first algorithm and the site has very poor page speeds, how big do you think the loss in rankings/organic traffic would be if the site is moved over prior to page speed issues being fixed?

Gary’s Response

Probably none. We already saw your pages are slow as a rheumatic snail before moving it to MFI, so you’ll likely keep the old rankings.

Backlinks and Deindexed Sites

If you get a backlink and then that website gets deindexed somehow, does Google still count that backlink in any way? Or let’s say some unindexed site spams your site, will it affect it in any way?

Gary’s Response

No.

Negative Link Velocity

Many websites I help have a negative link velocity due to shady link deals and dropped links.

Can you clarify / confirm:

1 That a negative link velocity is something that should be looked at and addressed? (If a website has lost 250 referring domains in the past 6 months, then that would indicate that the website is not performing well)

2 How long does Google’s historical records go back? (What if a website had 20000 referring domains in 2009 but now only has 300 referring domains. How will that compare to a brand new domain that has acquired 300 referring domains in the past year?)

3 Short of changing the domain URL (and perhaps redirecting the old domain), is there a way to address the loss of hundreds of referring domains?

Gary’s Response

1 Generally that would affect pages not the whole domain, as they would lose pagerank.

2 Haha… no.

3 Get new ones?

I would love if you didn’t focus that much on links and made up terms like link velocity.

Embedding Maps on a Landing Page

When we embed a map on a landing page, search console can’t crawl it. Is there any negative effect with the rankings?

Gary’s Response

No.

Googlebot Australia

What will happen if I block Googlebot appearing from the USA but allow Googlebot from Australia? Will my content written specifically for Aussies be indexed?

Gary’s Response

There’s no Googlebot AU afaik.

Nofollowed Links

Do you look at specific sources for nofollowed links, and use that to influence the value of other links?

Gary’s Response

nofollowed links don’t have value

Canonical Tags and Canonical Signals

In light of the recent announcement that search console will be showing the stats for canonical URLs: Suppose there is a website which doesn’t have particularly good canonicals and Google has been forced to choose canonicals for it (and in some cases picked the wrong one). Is there anything in particular which would trigger Google to re-evaluate that decision?

Gary’s Response

The strongest canonical signal is rel=canonical. Implement them, take control over your content and site!
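
To check what a page currently declares, a minimal Python sketch using the standard `html.parser` module can pull out any `rel=canonical` links. The class and function names here are invented for the example, and it only inspects the HTML itself, not HTTP headers or sitemaps.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel_values = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_values and attr.get("href"):
            self.canonicals.append(attr["href"])


def find_canonicals(html):
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals


page = """
<html><head>
  <link rel="stylesheet" href="/site.css">
  <link rel="canonical" href="https://example.com/widgets/blue">
</head><body></body></html>
"""
print(find_canonicals(page))
```

A page that returns zero or several canonicals from this check is worth reviewing, since either case leaves the choice to Google.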

Multinational Website with UGC

Let’s imagine you have a multinational website having user-generated content, like Twitter or Facebook. Each tweet / post is written in a particular language. It can be English, German, Spanish, whatever. How to ensure these websites can reach the international audience through SERPs? Assuming I use hreflang tags, do you think translating user interface language is enough to make such websites successful in the international market?

Gary’s Response

The pages will rank on their own for user queries just fine. FB, Twitter, etc. they all do quite well in most languages.

Redirecting Domain and Discontinued Product

I have two products, each with its own domain. One of the products has been discontinued and I 301 redirected all the traffic for its domain to the other domain.

Gary’s Response

I guess it will slightly help it.

Linking Blog Posts on Same Topic

If I have several blog posts about the same topic, should I link them together?

Gary’s Response

It’s good practice, but i don’t think you’ll see more gain than that from pagerank.

Slow Times to Digest Old Domains Redirected

“Recently I noticed very slow times to digest old domains redirected. Having cases where the old site even 301ed and migrated from gsc still covering most of the SERPs” Q. Why does this happen & how can we avoid it?

Gary’s Response

You don’t need to do anything. We’re simply surfacing the old URLs to… Not confuse users i guess? Honestly, that URL we show in the results are sometimes fairly useless, maybe we should test again what happens if we remove them.

JSON-LD and Schema

Through JSON-LD and Schema, I annotate my texts using “sameAs” properties that point to dbpedia (and other public knowledge database) entity URLs. Will these “sameAs” contents be used by Google to better understand the topics discussed in the web page and how well the page matches with the query?

Gary’s Response

Likely. All SD is used in some way so, yeah, likely
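
For anyone unfamiliar with the markup being discussed, here is a short Python sketch that builds JSON-LD with `sameAs` pointing at a DBpedia entity URL. The headline, the entity chosen, and the helper name `article_jsonld` are assumptions for illustration; whether and how Google uses these values is only "likely", per the answer above.

```python
import json


def article_jsonld(headline, entities):
    """Build Article JSON-LD whose 'about' items carry sameAs entity URLs."""
    return json.dumps(
        {
            "@context": "https://schema.org",
            "@type": "Article",
            "headline": headline,
            "about": [
                {"@type": "Thing", "name": name, "sameAs": url}
                for name, url in entities
            ],
        },
        indent=2,
    )


# Illustrative entity annotation; swap in the entities your text really covers.
markup = article_jsonld(
    "Takeaways from a search AMA",
    [("Search engine optimization",
      "http://dbpedia.org/resource/Search_engine_optimization")],
)
print(markup)
```

The resulting string would go inside a `<script type="application/ld+json">` element on the page.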

Google and Conversion Data

Google is smart enough to understand conversions on some website…Is the percentage (or a number) of conversions something that Google would count as a ranking factor (as a good UX signal)?

Gary’s Response

Nope.

The User Journey

One of my pages, even if it’s high quality in terms of content and user experience, ranks very poorly. The page features a game that users can play. However, when they want to play, they are taken through the game launcher and registration form, both on separate subdomains. So the user journey is something like this: Step 1: User lands on a webpage on domain.com, reads and wants to play -> Step 2: user is redirected to a launcher.domain.com -> Step 3: user is redirected to registration.domain.com.

My question is: could this user journey actually be interpreted as a bounce and associated with low time on page, since the user lands on the main domain, and then goes through 2 different subdomains? Could this in turn skew user metrics and influence rankings in a negative way?

Gary’s Response

No.

Noindex Directive in Robots.txt

Why doesn’t Google want to officially support the Noindex directive for robots.txt? Webmasters try to use robots.txt for that, Googlebot respects it, so why not make it official?

Gary’s Response

Because robots.txt was not invented to control indexing. It was invented for controlling crawling. The fact that noindex works at the moment is a happy accident for you, unhappy for me…If we try to index disallowed pages without crawling then those pages either shouldn’t be disallowed and just have a noindex, or shouldn’t be disallowed at all. We try to index disallowed pages without crawling them if we have evidence that they are likely important for users.

Pedro Dias

Gary, I heard Pedro Dias worked at Google. Can you confirm?

Gary’s Response

Confirmed. He was when he was at Google and still is a good friend of mine.